The Future Doesn’t Care About Your Start-Up - Published on Medium, July 2014

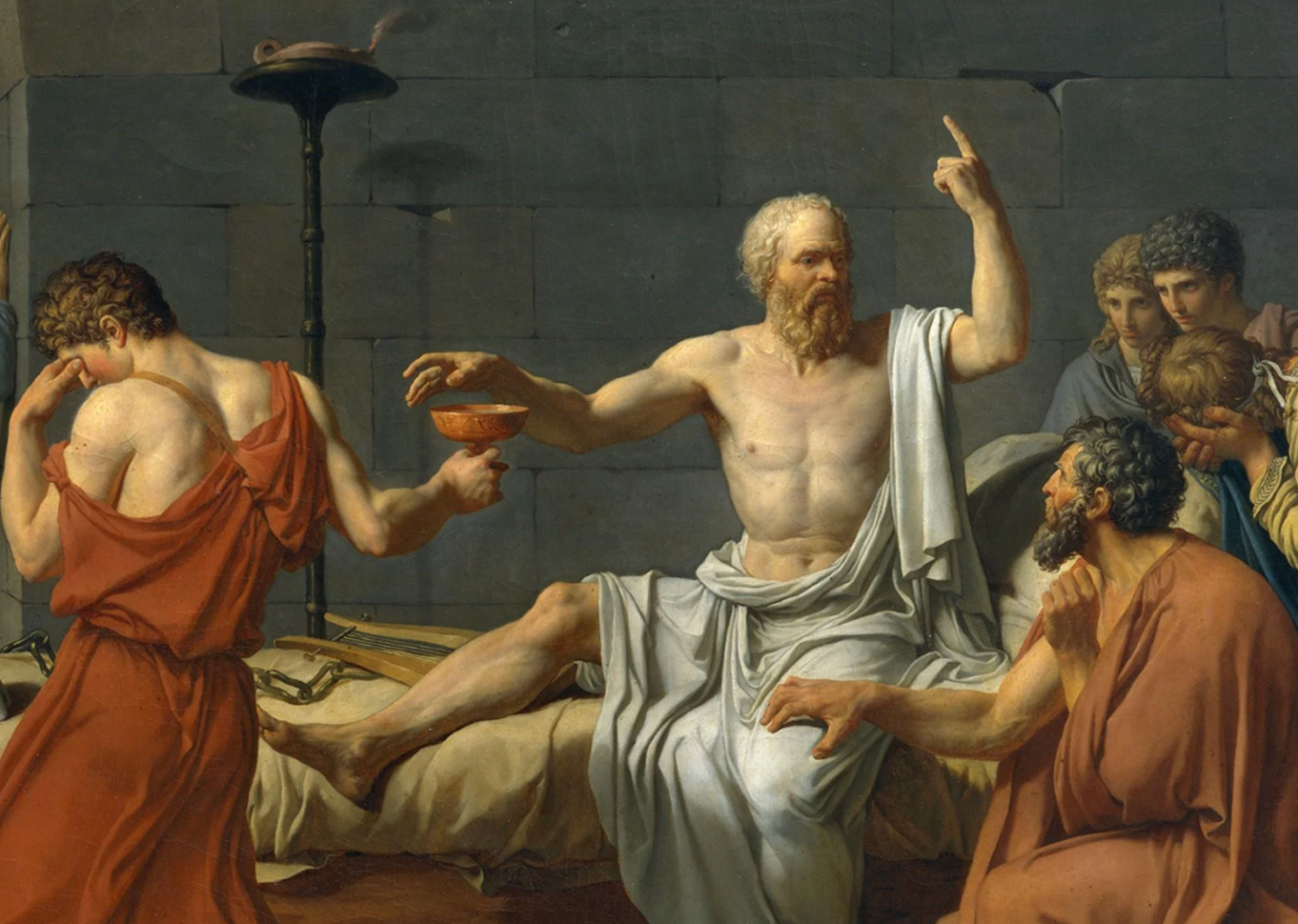

Venture Capitalists of the future need to resemble the Philosopher-Kings of the Past. How do we find a way to ensure and safeguard a positive advancement for humanity whilst fulfilling short-term financial incentives?

Innovation and venture capital in a world of global risks

Throughout a philosophy degree, you’re confronted with the idea of having to build society from scratch, re-creating social, financial, educational and political systems. The archaic web of systems that supports our current arc of history reminds me on a daily basis of the impossibility of ever having such a liberty. Somehow we’re still holding onto models arranged in the early 20th century when our world looked very different. Our educational systems are predominantly the same. We base economic models on the same principles we dreamed up to handle a radically changing world a century ago. Some of us fear it will take a global catastrophe before any real changes will begin. It is the general consensus among existential-risk researchers that a worldwide disaster in the next hundred years might be the accelerator for new governing bodies.

There are lots of reasons to study philosophy. It’s often dismissed as the choice you make ‘if you’re not sure what to study’, but I had known it would be my subject since I was a child. Compelled by a fascination with life, I wanted to understand it: where it came from, what it meant. These questions are by no means specific to, or answered by, philosophy; they take you through the sciences and theology too. A little later in life the question graduated to one of asking where life was going, not that I had found answers to my initial questions. As an adult, I have found philosophy to be less about finding the answers and more about framing the right questions. And those questions turned into: What are the long-term projections for humanity? And how do we ensure and safeguard a positive route to the future?

These aren’t generally the topics of discussion in Silicon Valley (SV), the homeland of technological ideas that last year became my home. The question in SV revolves around the hunt for the ‘Next Big Thing’. Where is it? Who made it? How many users does it have?

Technology has become an inherent part of our lives, and the future of humanity rests on it. We need it for global catastrophe mitigation. As much as technology has played a part in climate change, it will also be the only thing that can provide us with a way out of the harm. Global pandemics can only be controlled with the advancement of medicine. An asteroid impact on Earth can only be avoided with technology. It would be arrogant of us to think that we are special enough to exist through all time. Eventually the human species will most likely become extinct: an animal that lived in a tiny time-slice of the cosmos, unfortunately burdened with enough intelligence to anticipate its own extinction.

Questions regarding the future of humanity aren’t the sort of topics that are going to be picked up on in Venture Capital brunches on Sand Hill Road. The problem with us constantly thinking in the short-term, with short-term rewards and incentives, is that we line up future generations to be burdened with our disregard for their existence. Long-term thinking should not be limited to the philosophical arena. It’s the role of philosophy now to show that this type of thinking is beneficial to all the areas that make ideas tangible.

Defining Technology: investors are playing with something bigger than they think

The word technology stems from two Greek words, transliterated techne and logos. Techne means art, skill, craft, or the way, manner, or means by which a thing is gained. Logos means word, the utterance by which inward thought is expressed, a saying, or an expression. So, literally, technology means words or discourse about the way things are gained.

Lately, technology has come to mean something different. In one respect, the term has come to mean something narrower — the above definition would include art or politics, yet even though those activities are permeated by technology now, most of us would not consider them to be examples or subsets of technology. In another respect, this definition is too narrow, for when most of us speak of technology today, we mean more than just discourse about things gained. We can look at it in the following ways:

1. Technology in one aspect is the rational process of creating means to order and transform matter, energy, and information to realize certain valued ends.

2. Technology is the set of means (tools, devices, systems, methods, procedures) created by the technological process. Technological objects range from toothbrushes to transportation systems.

3. Technology is the knowledge that makes the technological process possible. It consists of the facts and procedures necessary to order and manipulate matter, energy, and information, as well as how to discover new means for such transformations.

4. A technology is a subset of related technological objects and knowledge. Computer technology and medical technology are examples of technologies.

5. Finally, technology is the system consisting of the technological process, technological objects, technological knowledge, developers of technological objects, users of technological objects, and the worldview (i.e., the beliefs about things and the value of things that shape how one views the world) that has emerged from and drives the technological process. This is in part what Kevin Kelly refers to as the technium.

For Kelly, the emergent system of the technium — what we often mean by ‘Technology’ with a capital T — has its own inherent agenda and urges, as does any large complex system, indeed, as does life itself. That is, an individual technological organism has one kind of response, but in an ecology comprised of co-evolving species of technology we find an elevated entity — the technium — that behaves very differently from an individual species. The technium is a superorganism of technology. It has its own force that it exerts. That force is part cultural (influenced by and influencing of humans), but it’s also partly non-human, partly indigenous to the physics of technology itself.

“I tend to think of the technium like a child of humanity. Our job will be to train the technium, to imbue it with certain principles because, at a certain level and at a certain age, it will basically become much more autonomous than it is now. It will leave us like a teenager who goes on to live alone: although he or she will continue to interact with us and will always be part of us, we have to let it go.

“We can’t raise a successful human by remaining in complete control as parents. We have to train our children well — bury within them a strong conscience with deep values that can guide them to do the right thing in situations we had not foreseen or even imagined. We need to do the same with the technium and our technologies. In the same sense we need to embed our values into the technological superorganism so that these heuristics become guiding factors. As more autonomy is given and won by the technium, it will then be able to do the right thing.”

– Kevin Kelly, Edge.org

So what do we mean when we credit Silicon Valley as being the homeland of technological ideas? The idea of technology is perhaps more complicated and philosophical than the general consensus gives it credit for. Most of us can appreciate the first four definitions, but the fifth, which folds the entire ecosystem of technological progress into what is seemingly a seventh kingdom of life, places a value on technology that goes beyond our predominantly anthropocentric thinking. We credit technology with helping us get cars on demand or put music in our pockets: the medium of ideas that make our lives a little easier. But when we start to consider technology in terms of Kelly’s technium, we begin to acknowledge how important it is to ensure that we shape it in the right way, and how current decisions regarding technology are more significant than we may initially consider.

Technologies are made viable not just by those who dream up the ideas, but by those who fund and support ideas to shape the ecosystem as a whole. Every action that affects the technological process is a decision that is inherently long-term and philosophical. Venture capitalists who invest in technology are rewarded for having good financial skills, but I contend that their responsibility for the advancement of humanity is much bigger than their responsibility for the returns of their Limited Partners.

A range of problem solving

We use the technium to solve problems. In reality there’s a whole scale of problem-solving, ranging from the superfluous (getting snacks delivered to the office) to problems whose solutions, as mentioned before, simply make our lives easier (on-demand car services). But the problem scale continues right through to global catastrophes (climate, aging) and all the way up to existential risks, the problems that could cause the extinction of humanity (nuclear warfare, climate, pandemic, asteroid impact). The average entrepreneur looks along the scale from superfluous to global catastrophe and searches for viability and market opportunity in the area they want to fix. Market size depends on the audience, especially in terms of necessity and desire. It may seem that more people will want you to deliver snacks to their office than will want to discuss the probabilities surrounding existential risks, let alone attempt to mitigate them.

A lot of us know about existential risks, and yet the most frequent response on the topic is that if it were really an issue, somebody would be doing something about it. The trouble is that there is no glory in heroic preventative measures. The physicist Max Tegmark, who is currently heading up the Future of Life Institute, pointed out that more people know Justin Bieber than know Vasili Arkhipov, a Soviet naval officer who is credited with single-handedly preventing thermonuclear war during the Cuban Missile Crisis. No one celebrates the government, the individual, or the investor who prevents a disaster, because we can’t comprehend the alternate outcome. And it’s nearly impossible for us to comprehend the scale of catastrophes. Life-extension activists will remind you that over 100,000 people die every day of aging-related causes, but that number won’t create 100,000 times the compassion you feel when a relative lies on their deathbed, weakened by years of steady decline.

By tackling issues at the lower end of the problem scale, entrepreneurs and investors quite obviously make it easier for a project to become viable. A snack-delivery company has a much clearer path to viability than a biotech company that attempts to solve a poorly understood medical issue and then has to take on the FDA, the NIH and the pharmaceutical industry, or a clean-tech company whose product requires innovation in several different areas to succeed. It is always going to be harder, more challenging and less instantly rewarding to tackle the larger issues. But if you head to NASA Ames Research Park in California, you’ll find Singularity University encouraging young entrepreneurs to create companies that have the potential to benefit 1 billion people within 10 years. It’s one of the rare programs and incubators that specifically acknowledges and targets the heavier end of the problem scale. The issue with entrepreneurs and investors constantly targeting low-innovation problems is that the ecosystem then inflates the value of this type of problem-solving. It’s a herd mentality towards safety, encouraged by the successes of low-innovation products (Instagram, Snapchat).

How did Venture Capital end up supporting low innovation?

At the beginning of the digital era, in the 70s and 80s, when investments went into high-innovation, high-risk companies like IBM and Genentech, the ecosystem focused on bold innovation. This lasted until the dot-com bubble of the 90s, when entrepreneurs realized that technology (aka the Web) could be used to solve easier problems, and that a company could go public or be acquired by a larger company for financial reward. For all sorts of reasons the whole thing infamously crashed. And so, for the last 15 years or so, the ecosystem has been in a state of ambiguity, still holding onto the enthusiasm and hope of the 90s whilst at the same time trying to carve itself into something that serves the 21st century. By no means has it reached its goal, which means that these are formative years in which investors will set the tone for decades to come.

The choices that are being made in technological investments are shaping the technium. The start-up ecosystem represents more than just company-formations and dollar-bills. It represents human interaction with Technology itself. It represents our comprehension of its past and current state, as well as the power for the future. By undervaluing the technium and Technology, we undermine ourselves and our own future.

Whatever ideas we have, we need technology to fabricate them and capital to fund them. Emerging technologies such as the NBICs (nanotechnology, biotechnology, information technology and cognitive science) bring philosophy alive. Bertrand Russell wrote in A History of Western Philosophy that philosophy is the ‘No Man’s Land’ between theology and science. As fewer people turn to religion to find answers, philosophy becomes an increasingly important subject. Technology may help us find certain answers, but philosophers need to frame the questions and advise decision-makers on how best to guide the technium.

Let’s not discredit the tech ecosystem too much...

For sure there are plenty of start-ups tackling big issues. Clean tech is a huge industry tackling global catastrophe and the potential existential risks from climate change. When it comes to innovation trends, Google sets the tone with its hires, which signal the value it places on AI and AGI (Geoffrey Hinton for machine learning, Ray Kurzweil for natural language processing), as well as by promoting life extension with the team and money behind Calico. There are funds, both in the Bay Area and worldwide, investing in NBIC and emerging technologies. But not as much as there should be. And not specifically in existential-risk mitigation. The problem is that the positive advancement of humanity and the mitigation of existential risks are neither valued as a priority nor easy to define as a viable industry or business.

There’s a massive danger in waiting and tackling these issues only when they become necessary. Some of us need to champion the value of putting safeguards in place before the need for them arises. The Norwegian Government, in a rare example of a governing body taking a view longer than a politician’s term in office, has already stored over 2 million seeds, representing all known varieties of the world’s crops, in a bunker in the Arctic archipelago of Svalbard. The real value of governments getting involved in catastrophe mitigation strategies, though, is that it also allows us to be innovative about how we want the world to be. And once we start evaluating how we want the world to be, it’s hard to sit back and wait for a global catastrophe to bring that future about. We may want to innovate on current social, financial and educational models, but no one wants to lose 75% of life on this Earth in a biological or nuclear war to achieve it. By studying and exploring existential risks, individuals inherently learn long-term thinking. We train our minds to look outside of ourselves and into the role of humanity in the past, present and future of the cosmos.

But at least I’d die a rich man?

The media of the last decade has taught us how easy it is for individuals to dip into the start-up ecosystem and leave with large financial rewards. We read stories of investors who fund early-stage tech and get a 5x, 10x, even 20x valuation a few years down the line. In reality, these successes are rare. In 2012 the Kauffman Foundation published a 52-page paper that detailed the performance of the foundation’s investments in VC funds. The Kauffman Foundation, created to encourage entrepreneurship, has more access to top-tier funds than pretty much anyone. The foundation has invested in over 100 such funds over the last 20 years, and the datasets in the 2012 paper give a view of VC returns over those two decades from a Limited Partner’s perspective. The main gist of the paper was this: the positive IRRs come from funds raised before 1995 that took advantage of the dot-com bubble. The majority of VC funds have not provided good returns on investment for the last two decades.

“During the twelve-year period from 1997 to 2009, there have been only five vintage years in which median VC funds generated IRRs that returned investor capital, let alone doubled it. It’s notable that these poor returns have persisted through several market cycles: the Internet boom and bust, the recovery, and the financial crisis… In eight of the past twelve vintage years, the typical VC fund generated a negative IRR, and for the other four years, barely eked out a positive return”

– Kauffman Foundation report, 2012

In the minds of the sharp suits in the city, venture capital seems attractive and sexy. Who doesn’t wish they had signed those initial cheques for Zuckerberg? We’re all looking for the big buy-outs, the Instagrams and the Snapchats with the hefty valuations. There’s an attitude of holding onto the dreams of the dot-com bubble, of over-investing in early-stage consumer tech companies in the hope that they’ll be the ones that hit big.

But these big hitters are becoming fewer and farther between, and VC as an asset class is proving itself to be of less value as time goes on. So as much as one would like to argue that low-risk, low-innovation investments are philosophically unsound, the only way to get real change in this field is to show that the low-risk, low-innovation trends of the last two decades are also economically unsound. It’s time for change and for new trendsetters.

As much as it seems obvious to some of us that the extension of healthy lifespan is a critical issue, a group of us came together recently and acknowledged that if we truly want to create a catalyst for support in this area, we need to work on an investment model that supports regenerative-medicine investments on the basis of their potential effect on the investor’s own lifespan and the benefit to their other investments. As much as we can morally argue that an investment is a duty, the world is not based on philanthropic models. And there’s not actually an issue with that.

We shouldn’t be hoping for regenerative-medicine investments to be made simply out of philanthropy. The link between high innovation, high risk and venture capital is broken, and instead of fighting for other resources, we need to work out how to fix it. If we can prove that current trends in venture capital aren’t working well (which we can), then what’s necessary is to combine minds from different backgrounds to build new investment structures that not only provide a better return, but also suit our goal of ensuring a positive advancement for humanity. It’s not just about writing articles, or organizing conferences, institutes or research departments in which we discuss why existential risk is a priority. What we need are new structures and models: mathematicians and economists working with philosophers and venture capitalists to find new ways of tackling finance and innovation. We need to find a way to safeguard humanity whilst still offering short-term financial incentives. For sure, we need innovators in finance more than we need innovation in technology. Perhaps digital currencies like Bitcoin will play a role here.

And how do we do that?

Emerging technologies and highly innovative ideas don’t always have clear M&A paths. A low-risk consumer-tech start-up is more likely to find an avenue to being acquired. Pivoting in consumer tech can be as simple as changing a few lines of code. There’s no comparison to be made with biotechnology research: get some initial propositions wrong, and you’ve lost millions of dollars in trials.

Technological investments of the last two decades have focused on volume rather than value, meaning that smaller investments have been made across a broad spectrum of companies. It’s risk mitigation in finance, and it makes perfect sense given that the technological ecosystem has set exits as the goal. By that logic it makes no sense to invest in bold innovation, because bold innovation doesn’t always have an industry leader to acquire it. The route for really bold innovation is often to aim for an IPO. And IPOs aren’t sexy, because they’re rare, complicated and often unachievable.

So do we disregard high innovation because the financial rewards are hard to accomplish? No. We work on creating a reward system for innovation that balances out the risk of investment. We need to innovate innovation-financing. An example of success in this category is the crowd-funding platforms that have risen in the last few years. Crowd-funding takes advantage of technology to stay outside archaic financial models and help ideas come to fruition. But crowd-funding is not the only finance innovation we can or will create with technology. Having set a goal for what we want, short-term financial incentives for long-term thinking, we need to bring together economists, philosophers and developers to brainstorm ways to achieve it from blank-slate perspectives. Let’s get the drawing board out and rethink capital.

For every emerging technology, there needs to be an independent body evaluating the risk and safeguarding the future before the technology is actualized. These bodies, or others, need to somehow also show governments that it’s in their financial interest to put more attention (and money) into long-term thinking, and into existential risks and global catastrophe mitigation. I believe that the only way existential-risk institutes can really command that kind of political presence is by first becoming invaluable in the private sector.

Technology increases man’s power to do both harm and good

We can’t deny that emerging technologies bring their own negatives. Whether it’s the Unabomber Manifesto or Bill Joy’s infamous Wired article ‘Why the Future Doesn’t Need Us’, we are constantly reminded that technologies themselves present existential risks.

Let’s consider nanotechnology. The astonishing end point of the 3D printing movement comes when we can use nanotechnology to build assemblers that construct anything out of anything, providing us with God-like capabilities, or at the very least a literal Midas touch. But an opposite version of these assemblers could take anything, or in this case ‘everything’, and regurgitate it into seemingly nothing: the ‘grey goo’ hypothesis. What happens if micro-assembling technology gets into the hands of those who have destructive intentions? Or if the original design has an intrinsic flaw that sets off a series of self-replicating nano-disassemblers? It’s beyond the scope of the most apocalyptic sci-fi scenario, and yet the technology is being developed.

And what happens when synthetic biology becomes available to anyone? Bill Joy and Ray Kurzweil famously came together in 2005 to criticize, and call for action against, the publication of the genome sequence of the Spanish Influenza virus that took 20 million lives a century ago. The potential for bio-weapons already surrounds us. Advances in computational biology will aid us in achieving our healthcare goals, but they could also significantly harm us. The concern with the bigger issues at the far end of the problem scale is that they are problem scales in themselves: the technologies that address them carry risks of their own. There is no existential-risk issue around Snapchat.

But the risks around these technologies are not a reason to withhold funding from their development. I have yet to meet (or hear of) someone who refused to give capital to an area on the grounds of a risk analysis concerning the advancement of humanity. Yes, there are lots of people without the capacity to fund technologies who call for a Luddite-style rejection of research. But a VC fund that declined to invest on such philosophical and ethical grounds would, by the same token, be valuing philosophy and ethics highly enough to invest in the high-innovation, high-risk (financial, not existential) projects that it did agree with.

There are non-profits tackling existential risks: the Future of Humanity Institute, the Future of Life Institute, the Centre for the Study of Existential Risk. The Machine Intelligence Research Institute in Berkeley, CA, directly tackles the issue of existential risk from artificial intelligence. Much of the initial funding to start these non-profits came as philanthropic gestures from notable venture capitalists. It shows that investors can both be aware of the positives and negatives of emerging technologies and actively attempt to safeguard the positive.

Call to action

By educating investors we have a chance to shape the technium in a way that provides benefits for both ourselves and future generations. Because the truth is, it doesn’t matter how much inheritance we bestow, how many good deeds we do, or how many conversations on the topic we have: in the face of global catastrophes or existential risks, everything we’ve ever thought, dreamed or envisioned, and every person we’ve ever loved, raised or cared for, will face the same fate. With technology we create the power to enhance and safeguard the future of the Earth, with all its wonderful biodiversity, all of its history, and all of its potential.

Future generations are not going to thank you for your photo-sharing app. And for those of us who recognize the necessity of change, it’s up to us to deliver for the next generation.